GitHub Chose the Token Meter. China Chose the Data Plan

Agent workloads broke subscription pricing on both sides of the Pacific. GitHub exposed the token cost. China wrapped it in a mobile-style bundle.

Since GitHub Copilot launched in mid-2022, subscribers have paid a flat monthly fee and used AI coding assistance without thinking about what each interaction cost. That arrangement ends on June 1.

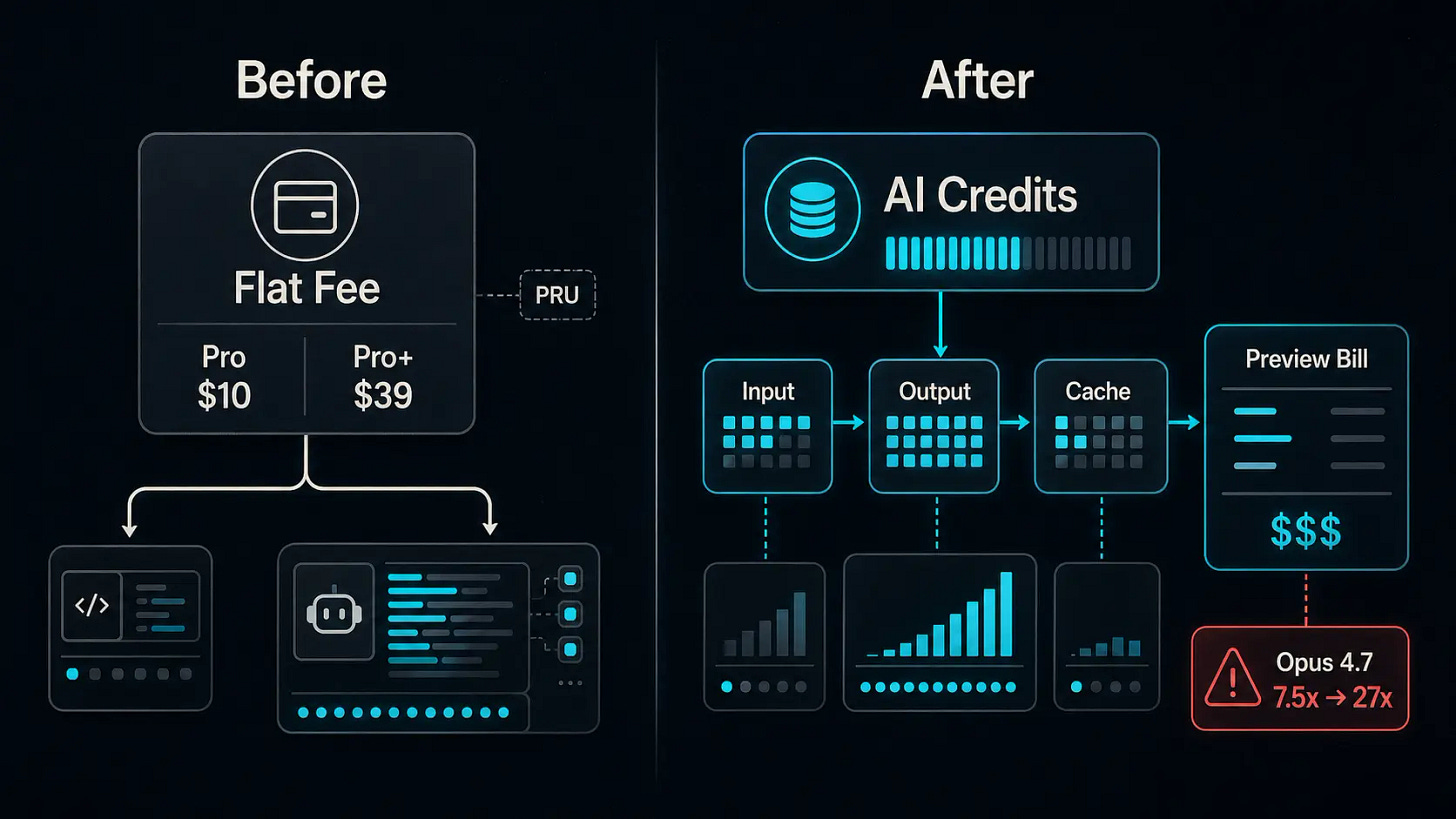

GitHub announced this week that every Copilot plan will transition to usage-based billing. A new virtual currency called GitHub AI Credits replaces premium request units. Each credit is worth $0.01. Copilot Pro at $10 per month includes $10 in credits. Pro+ at $39 includes $39. How quickly those credits deplete depends on which model a developer selects and how large each session’s context grows.
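The credit arithmetic can be sketched in a few lines of Python. This is an illustration only: the credit value and plan prices are the ones reported above, while the function names and the per-session credit figure are hypothetical, since actual per-model consumption rates are not given here.

```python
# 1 credit = $0.01, as stated in the announcement; amounts are kept in cents.
CREDIT_VALUE_CENTS = 1

# Monthly plan prices in cents; each plan includes its own price in credits.
PLAN_PRICE_CENTS = {"Pro": 1000, "Pro+": 3900}


def included_credits(plan: str) -> int:
    """Credits bundled with a plan each month."""
    return PLAN_PRICE_CENTS[plan] // CREDIT_VALUE_CENTS


def sessions_per_month(plan: str, credits_per_session: int) -> int:
    """Whole sessions the bundled credits cover, given an ASSUMED
    average credit burn per session (hypothetical input)."""
    return included_credits(plan) // credits_per_session


if __name__ == "__main__":
    for plan in PLAN_PRICE_CENTS:
        print(plan, included_credits(plan), "credits")
    # Hypothetical: a session that burns 250 credits ($2.50).
    print(sessions_per_month("Pro", credits_per_session=250), "sessions on Pro")
```

The point of the sketch is the second function: the plan price fixes the numerator, but the denominator depends entirely on model choice and context size.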

The subscription prices are unchanged. What they buy is not. Under the old model, a quick chat question and an agent session running for hours could cost a user the same amount. Under the new model, the gap between those two interactions shows up on the bill. Annual subscribers face a sharper adjustment: cost multipliers for premium models jump on June 1. Opus 4.7, currently at a 7.5x multiplier per request, rises to 27x, a 3.6-fold increase.

Code completions and Next Edit Suggestions remain included at no credit cost, which matters for developers whose primary use stays within the editor. But anyone running Copilot’s chat, agent mode, or code review features now faces a visible price tag tied to the model and context each session consumes. To ease the transition, GitHub is launching a preview bill in early May that projects costs before the new system takes effect. Business and Enterprise customers receive promotional credit pools for June through August, with usage pooled across the organization rather than locked to individual seats.

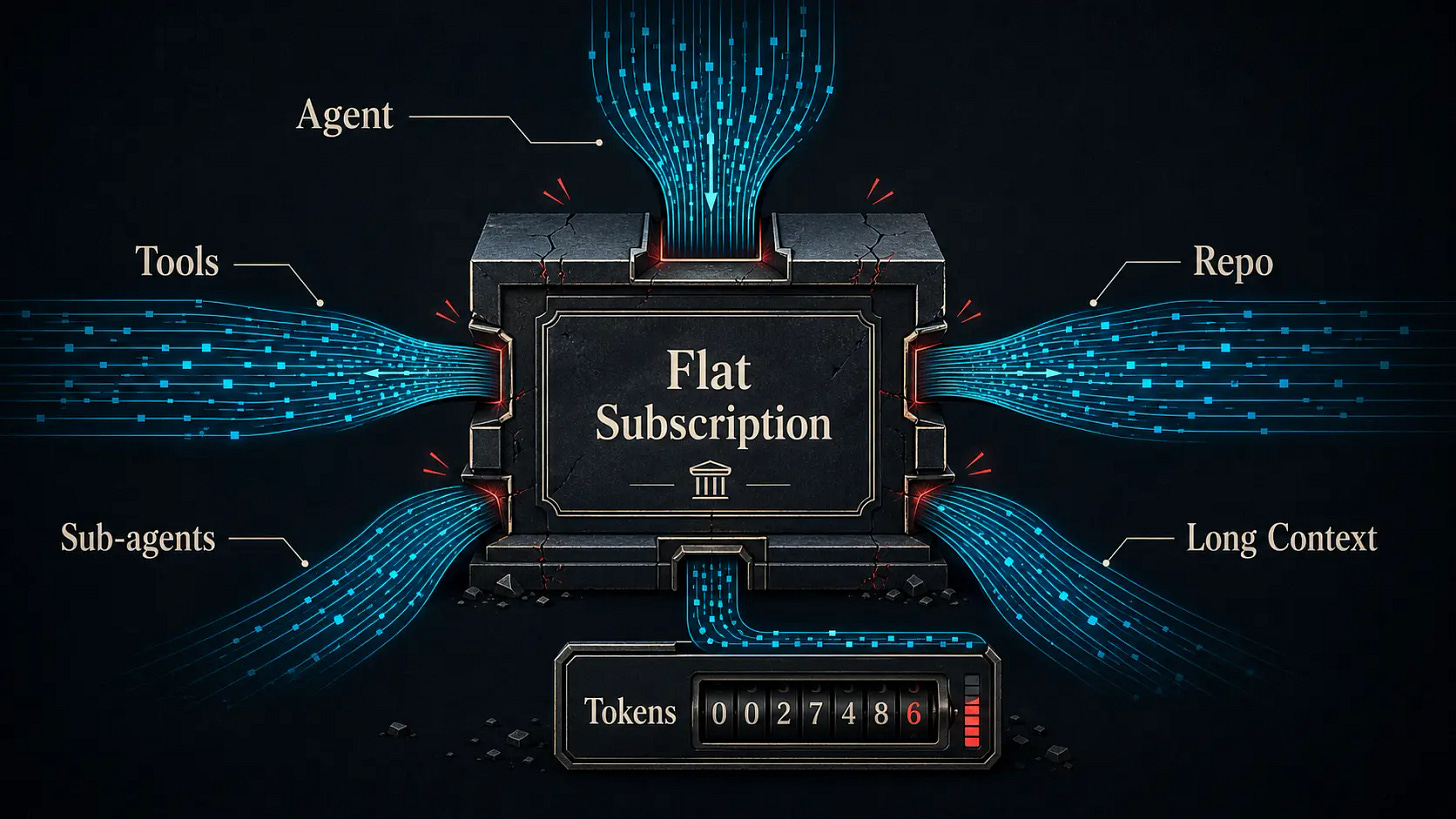

GitHub’s chief product officer framed the shift in structural terms. Copilot “has evolved from an in-editor assistant into an agentic platform.” The premium request, a billing unit built for the chat era, could no longer represent the cost of what Copilot had become. In effect, GitHub acknowledged that its AI coding tool was not a software subscription. It was a compute service with a subscription wrapper, and the wrapper had to go.

When Agents Broke the Subscription

GitHub reached this point alongside most of the industry.

Anthropic cut off third-party agent frameworks from routing API calls through its consumer subscriptions earlier this month. OpenAI debuted a $100 subscription tier to boost usage of its Codex model. Cursor moved from fixed request quotas to a credit pool weighted by model and task complexity. Google tightened usage policies for Gemini CLI. Each arrived at its own version of the same conclusion: flat-rate pricing absorbs light, predictable workloads well enough. It falters with agent-scale computation, where a single task can spawn sub-agents, traverse entire repositories, and consume millions of tokens over hours.

The OpenClaw surge made the hidden subsidy harder to ignore. When the open-source agent framework went viral earlier this year, developers began running continuous coding sessions through subscription plans designed for intermittent use. The usage distribution that made flat rates viable shifted hard toward the heavy end. The Register’s Thomas Claburn compared the situation to Red Lobster’s Endless Shrimp promotion, which famously helped push the restaurant chain into bankruptcy.

The structural pressure runs deeper. Gartner estimates that cumulative capital investment in AI data centers will approach $6.3 trillion between 2024 and 2029. To clear even a minimal return on that build-out, model providers would need to generate close to $7 trillion in cumulative AI-driven revenue across the same period. Room for subsidized inference is narrowing, and the companies absorbing the heaviest losses from agent workloads are moving first.

GitHub’s response: expose the token cost and let users manage it.

Three Layers, One Market

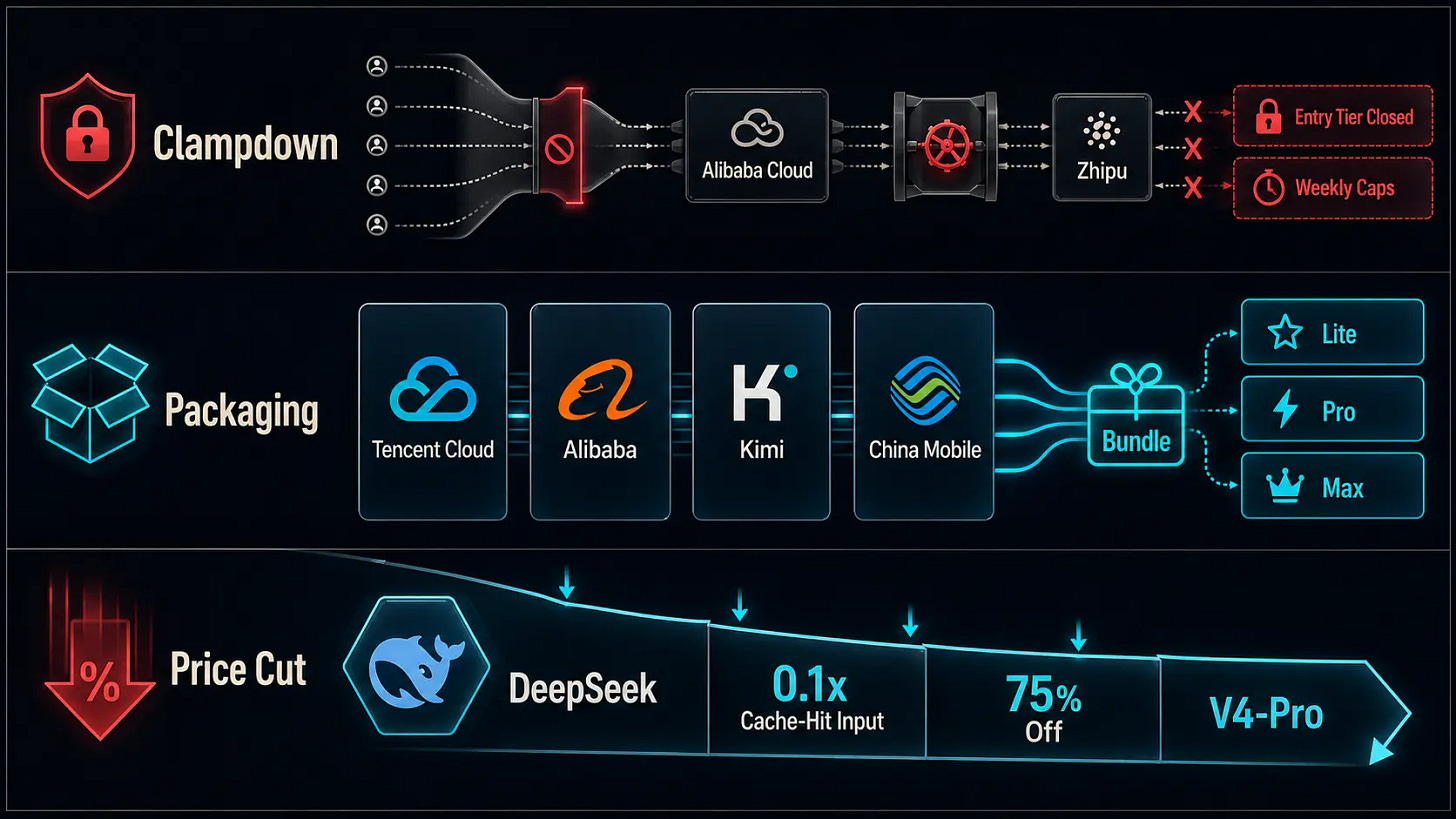

Over the same month, Chinese AI platforms confronted the same pressure from agent workloads. Their responses look different from GitHub’s single billing switch, and from each other. But the split is telling: application platforms are tightening access, cloud providers are repackaging usage into consumer-friendly bundles, telecom operators are distributing token packages through mobile channels, and infrastructure players such as DeepSeek are still cutting prices to pull workloads toward their stack.

The first layer is a clampdown. Alibaba Cloud stopped accepting new sign-ups for its entry-level coding plan in March and ended renewals in April, leaving only a ¥200-per-month (about $28) Pro plan available through a daily limited-quantity drop at 9:30 AM. According to developer reports, slots typically vanish within minutes. Zhipu, the company behind the GLM model family, is ending auto-renewal on April 30 for its older coding subscriptions, which carried no weekly caps. Replacement plans introduce tighter periodic quotas. The logic parallels GitHub’s: low-price tiers cannot absorb the inference demands that agent frameworks generate.

The second layer is packaging. Where GitHub chose to surface token costs directly, several Chinese platforms chose to embed usage-based economics inside a consumer-friendly shell. Tencent Cloud’s Hy Token Plan is the clearest example. Explicitly positioned for agent workloads, it packages access to Tencent’s latest models with tiered monthly credit pools compatible with OpenClaw, Claude Code, Cursor, and other popular frameworks. Users pick Lite, Standard, Pro, or Max. They do not see a per-token invoice. Alibaba introduced a parallel team-tier Token Plan that unifies text and image model consumption under a single credits system. Moonshot folded Kimi Code into its broader membership system alongside document generation, slides, and agent capabilities, starting at ¥39 per month (about $5), with no token pricing visible to the user. China Mobile’s provincial branches in Jiangsu, Beijing, and Guangdong began offering AI token packages this month, with one regional plan providing 20 million tokens for coding use and another starting at ¥5.99 (under $1) per session pack. Tokens are migrating from developer platforms toward telecom distribution channels, extending a pattern already visible in China’s broader token economy. The underlying math across these products resembles GitHub’s AI Credits. The user-facing design looks closer to a mobile phone plan: pick a tier, use until the quota resets.

The third layer runs in the opposite direction. While application-layer platforms tightened quotas or raised effective prices, DeepSeek cut. On April 26, it reduced cache-hit input pricing across its entire model lineup to one-tenth of launch rates and extended a 75% discount on V4-Pro through May 31. Lower per-token costs make long-running, cache-heavy agent sessions cheaper to operate, pulling developer workloads toward DeepSeek’s infrastructure regardless of which frontend tool sits on top. China is not moving in one direction. Platforms that sell to developers are tightening. The infrastructure layer underneath them is still competing on price.
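A rough Python sketch shows why a cut to cache-hit pricing matters most for agent sessions, which resend large, mostly unchanged contexts. The prices and the hit ratio below are placeholders chosen for illustration, not DeepSeek's actual rates. Only the one-tenth reduction comes from the reporting above.

```python
def input_cost_usd(
    input_tokens: int,
    cache_hit_ratio: float,
    miss_price_per_mtok: float,
    hit_price_per_mtok: float,
) -> float:
    """Input-side cost when a fraction of tokens is served from cache.

    All prices are in USD per million tokens and are HYPOTHETICAL.
    """
    hit_tokens = input_tokens * cache_hit_ratio
    miss_tokens = input_tokens - hit_tokens
    return (
        hit_tokens * hit_price_per_mtok + miss_tokens * miss_price_per_mtok
    ) / 1_000_000


if __name__ == "__main__":
    # Placeholder figures: 50M input tokens, 90% of them cache hits.
    tokens, hit_ratio = 50_000_000, 0.9
    miss_price = 1.00       # assumed cache-miss price per million tokens
    launch_hit_price = 0.20  # assumed cache-hit price at launch

    before = input_cost_usd(tokens, hit_ratio, miss_price, launch_hit_price)
    # The cut described above: cache-hit price falls to one-tenth.
    after = input_cost_usd(tokens, hit_ratio, miss_price, launch_hit_price / 10)
    print(f"before: ${before:.2f}  after: ${after:.2f}")
```

The higher the cache-hit ratio, the larger the share of the bill the discount touches, which is why the cut favors long-running sessions over one-off queries.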

The Meter vs. The Bundle

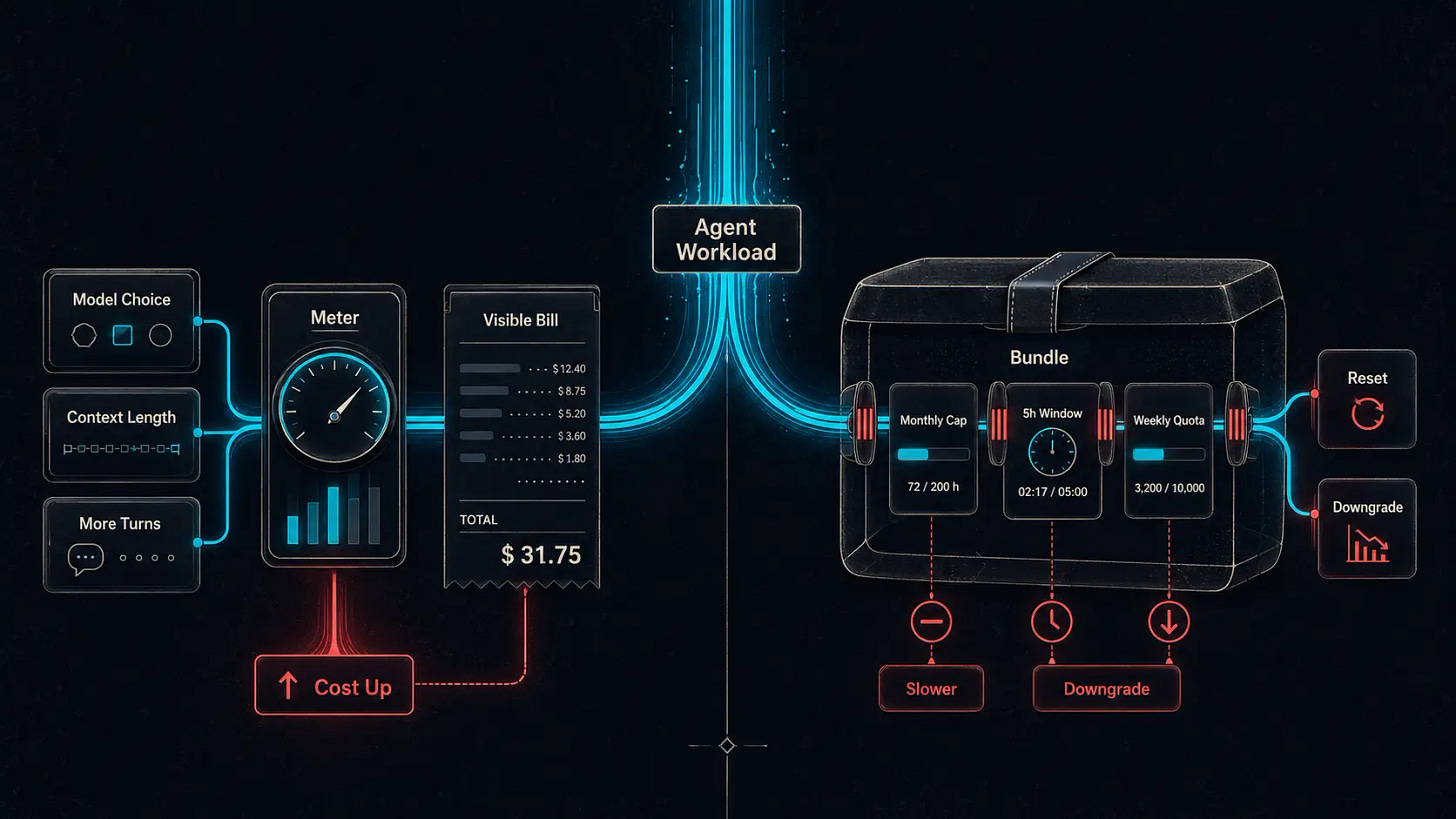

Beneath the surface-level packaging difference lies a structural question: who absorbs the cost uncertainty that agent workloads create?

GitHub’s model shifts that uncertainty onto the developer. A $10 monthly credit pool buys a variable amount of work depending on model choice, context length, and how many turns an agent needs to complete a task. Developers noticed quickly. “Your ‘usage-based billing’ will make it significantly harder for you to predict your usage,” one commenter wrote in the community thread. Another asked a question that several echoed: “If I have to pay per token, where’s the advantage compared to using the API of my favorite models directly?” Under this framing, Copilot’s remaining competitive edge compresses to a few specific areas: the VS Code integration, the GitHub workflow features, and the frictionless procurement path for enterprise teams already operating inside the Microsoft ecosystem.
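To see why the same pool buys such different amounts of work, consider a rough sketch of how an agent session's token bill grows. Everything in it is an assumption for illustration: the per-million-token prices are placeholders rather than any vendor's rates, and the growth model (each turn resends the full conversation so far) is a simplification that ignores caching.

```python
def session_cost_usd(
    turns: int,
    base_context_tokens: int,
    tokens_added_per_turn: int,
    output_tokens_per_turn: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
) -> float:
    """Estimate a session's cost when every turn resends the whole context.

    Prices are HYPOTHETICAL, in USD per million tokens.
    """
    total_input = 0
    total_output = 0
    context = base_context_tokens
    for _ in range(turns):
        total_input += context                  # full context billed as input
        total_output += output_tokens_per_turn  # model reply billed as output
        # New material plus the reply become part of the next turn's context.
        context += tokens_added_per_turn + output_tokens_per_turn
    return (
        total_input * input_price_per_mtok
        + total_output * output_price_per_mtok
    ) / 1_000_000


if __name__ == "__main__":
    # Placeholder prices: $3 input, $15 output per million tokens.
    chat = session_cost_usd(1, 2_000, 0, 500, 3.0, 15.0)
    agent = session_cost_usd(50, 20_000, 3_000, 1_000, 3.0, 15.0)
    print(f"quick chat: ${chat:.4f}   50-turn agent run: ${agent:.2f}")
```

Because the context is resent on every turn, input tokens grow roughly with the square of the turn count. That is the variance a fixed monthly pool now has to absorb.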

The bet GitHub is placing: visible costs will push both users and framework developers to reduce wasted tokens, improving efficiency across the ecosystem over time.

Chinese platforms have largely chosen to keep that uncertainty off the invoice and move it into product constraints. Users encounter monthly caps, five-hour rate windows, and weekly quotas rather than per-token bills. Users feel less exposed to price risk. But cost pressure still has to go somewhere. In practice, it tends to surface as slower inference during peak hours, lower-quality model outputs under heavy load, and hard quota resets rather than as a surprising invoice. The wrapper changes how the friction feels. The underlying economics remain.

GitHub also quietly altered the value exchange in another direction during the same week. On April 24, it updated its data policy: interaction data from Free, Pro, and Pro+ users, including inputs, outputs, and code context, will feed GitHub’s model training by default unless users opt out. Business and Enterprise customers are not affected. For individual subscribers, this adds a separate layer to the transaction. They pay for tokens. Unless they opt out, their interaction data can also improve the models behind those tokens.

What Neither Path Has Proven

GitHub and Chinese platforms agree on the diagnosis. Agent workloads make AI coding subscriptions economically unstable. Per-session costs are high, variable, and growing. The subsidy that made flat-rate plans viable for chat-era usage cannot stretch to cover agentic computation.

They disagree on the response. GitHub bet on transparency, exposing token costs and trusting market pressure to drive efficiency. Chinese platforms broadly bet on packaging, translating cost variance into familiar consumer formats and managing the strain through rate limits and quota structures rather than user-facing bills. Agentic coding is turning software subscriptions into something closer to compute markets, but China is trying to preserve the consumer psychology of subscriptions even as the underlying economics shift.

Neither has demonstrated what both sides need: a billing model that makes agentic coding sustainable for the platform while remaining affordable and predictable enough that developers use it daily without hesitation.

China’s experiment may be the more revealing one. Cloud providers, model companies, telecom operators, and consumer app platforms are running distinct pricing strategies simultaneously, while DeepSeek’s aggressive discounting applies competitive pressure from the infrastructure layer. The pricing question GitHub just resolved with a single billing switch, Chinese platforms are answering in at least five different ways at once. How those experiments play out over the coming months may tell us more about the long-term economics of AI coding tools than GitHub’s billing switch alone.