The Token Reckoning

China’s AI coding plans sell out within minutes. The economics have never closed.

Last week, Anthropic cut off third-party tools from routing API calls through Claude Pro and Max subscriptions. The decision triggered immediate backlash from developers who had built workflows around that access. Most commentary framed it as a platform defending revenue.

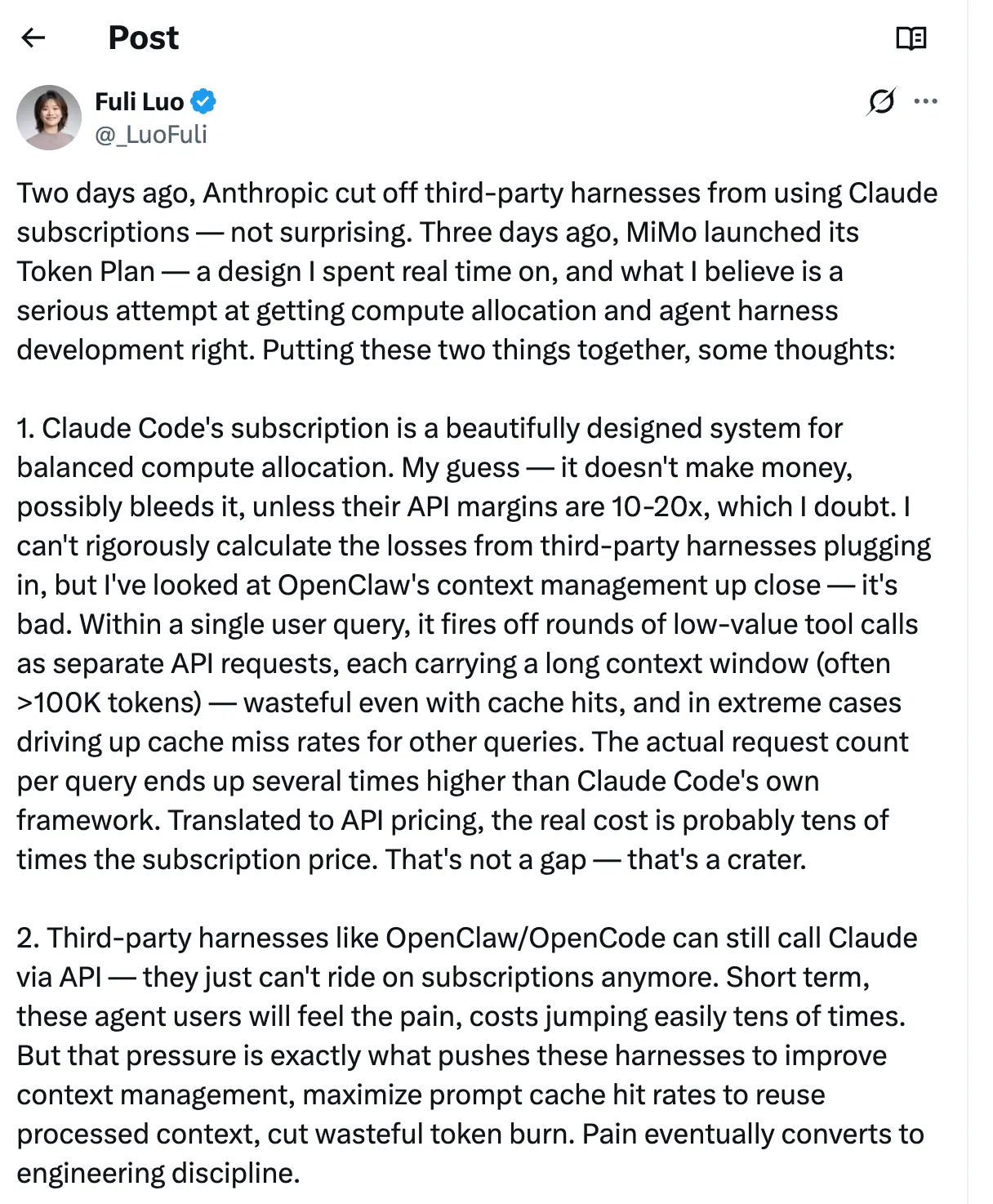

Luo Fuli read the move differently. Luo, who leads Xiaomi’s MiMo large model team and previously worked at DeepSeek, posted a detailed thread that rapidly circulated across Chinese tech circles. The crackdown was “not surprising,” she wrote. What followed was a structural indictment of how the entire industry prices AI coding, and a warning that selling tokens dirt cheap while leaving the door wide open to third-party harnesses “looks nice to users, but it’s a trap.”

Her timing was deliberate. AI coding subscriptions have become some of the most sought-after products in Chinese tech. On the major Chinese cloud platforms, limited-quantity coding plans often sell out within minutes of new slots opening. Developers set morning alarms and write auto-purchase scripts to secure monthly access.

The demand is real. The underlying economics point in the opposite direction. Subscription pricing, built for an era when each AI interaction consumed a few hundred tokens, is now absorbing agent workloads that consume 10 to 100 times more per task. China’s coding plan market may be the clearest visible test of what happens when that collision goes unaddressed.

When Every User Becomes a Power User

Luo’s analysis begins with the mechanics of flat-rate pricing. A fixed monthly subscription assumes a distribution of usage. Light users subsidize heavy ones. Averages hold, and the business survives. Gyms, streaming services, and cellular data plans all depend on this balance.
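The cross-subsidy can be made concrete with a minimal sketch. All figures below are hypothetical and chosen only to illustrate the mechanism; they are not drawn from any provider's actual costs.

```python
def plan_margin(price, monthly_costs):
    """Revenue minus serving cost across all subscribers of a flat-rate plan."""
    return price * len(monthly_costs) - sum(monthly_costs)

PRICE = 100  # hypothetical flat monthly price in dollars

# Hypothetical mix: eight light users ($20 to serve) and two heavy users ($300 to serve).
mixed_usage = [20] * 8 + [300] * 2

# The same plan if every subscriber behaves like a heavy user.
all_heavy = [300] * 10

margin_mixed = plan_margin(PRICE, mixed_usage)
margin_heavy = plan_margin(PRICE, all_heavy)

print(f"mixed usage margin: {margin_mixed}")
print(f"all-heavy margin:   {margin_heavy}")
```

With the mixed population the plan stays in the black; once every subscriber costs as much as a heavy user, the same price loses money on every seat.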

Anthropic built Claude Code’s subscription on that logic. Its native framework manages context carefully, maximizes prompt cache reuse, and consolidates tool calls to keep per-query costs within bounds. Within that controlled environment, the cross-subsidy between light and heavy users can work.

Third-party agent frameworks shattered the distribution from both ends. “I’ve looked at OpenClaw’s context management up close,” Luo wrote. “It’s bad.” Within a single user query, the harness fires off rounds of low-value tool calls as separate API requests, each carrying a long context window, often exceeding 100,000 tokens. The actual request count per query runs several times higher than what Claude Code’s own framework would generate. Converted to API rates, the real cost reaches tens of times the subscription price. In Luo’s assessment: “That’s not a gap. That’s a crater.”
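The arithmetic behind that gap can be sketched roughly as follows. The per-token price and the request counts are assumptions for illustration, not published rates or measured figures; only the 100,000-token context size comes from Luo's description.

```python
def query_input_cost(requests, context_tokens, price_per_million):
    """Input cost in dollars for one user query when every API request
    resends the full context and nothing is served from cache."""
    return requests * context_tokens * price_per_million / 1_000_000

PRICE_PER_MILLION = 3.0   # hypothetical input price, dollars per million tokens
CONTEXT_TOKENS = 100_000  # long context carried by each request

# Hypothetical request counts for a single user query.
consolidated = query_input_cost(3, CONTEXT_TOKENS, PRICE_PER_MILLION)
fragmented = query_input_cost(12, CONTEXT_TOKENS, PRICE_PER_MILLION)

print(f"consolidated tool calls: ${consolidated:.2f}")
print(f"fragmented tool calls:   ${fragmented:.2f}")
print(f"cost multiple:           {fragmented / consolidated:.1f}x")
```

Because cost scales with requests multiplied by context length, splitting one query into several times as many requests multiplies the bill by the same factor before any caching effects are counted.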

A second problem compounded the damage. Claude’s caching system depends on consistent prefixes in conversation context. When the same token sequence opens successive requests, the system reuses prior computation and skips redundant processing. As Luo noted, “many third-party harnesses compress tool responses every 3 steps when approaching the context limit, leading to very low cache hit rates.” Each compression rewrites the prefix, and the model is forced to reprocess the full context window from scratch. Cached work becomes wasted work.
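A toy model shows why rewriting earlier context is so costly. This is a simplified sketch, not Anthropic's implementation: it assumes a cache that reuses only the longest shared prefix between successive requests, and it treats each message as a single unit rather than counting real tokens.

```python
def shared_prefix_len(previous, current):
    """Count the leading items that two successive requests have in common."""
    count = 0
    for old, new in zip(previous, current):
        if old != new:
            break
        count += 1
    return count

def cache_hit_rate(previous, current):
    """Fraction of the current request that could be served from cached prefix work."""
    if not current:
        return 0.0
    return shared_prefix_len(previous, current) / len(current)

# Hypothetical conversation state after two tool calls.
turn = ["system", "user", "tool_out_1", "tool_out_2"]

# Append-only harness: the next request extends the previous one.
appended = turn + ["tool_out_3"]

# Compressing harness: earlier tool outputs are replaced by a summary,
# so everything from the first changed item onward misses the cache.
compressed = ["system", "user", "summary_of_1_and_2", "tool_out_3"]

print(cache_hit_rate(turn, appended))
print(cache_hit_rate(turn, compressed))
```

In the append-only case only the newest item needs fresh processing. In the compressed case the shared prefix ends at the first rewritten message, and the gap widens as the rewritten section grows.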

The combined effect pushed every query toward maximum cost. Users of third-party harnesses generated requests with the cost profile of power users, regardless of their individual usage intensity. The statistical distribution that made subscriptions viable simply collapsed. Anthropic absorbed the gap.

One striking case illustrates the scale. A Claude Max subscriber paying $100 per month generated over $5,600 in equivalent API costs in a single billing cycle, a subsidy ratio of roughly 56 to 1 at those figures. That sits at the extreme end, but the structural pattern held broadly: pricing designed for chat-era consumption funded agent-scale computation.

Luo framed the crackdown as the only rational outcome. Third-party tools retain access to Claude through standard API billing. What they lost is the ability to ride the subscription. In the short term, she acknowledged, costs for agent users will easily jump by tens of times. “But that pressure is exactly what pushes these harnesses to improve context management, maximize prompt cache hit rates to reuse processed context, cut wasteful token burn. Pain eventually converts to engineering discipline.”

China’s coding plan market is deep in the same trap. Alibaba’s Pro tier sells out by 9:30 AM each morning. Tencent’s promotional slots show as perpetually unavailable. Beneath the scarcity, throttled speeds, degraded models, and quota walls suggest a product category under severe cost pressure. What follows examines why a Xiaomi executive’s proposed fix drew fierce resistance, and what the shift from token price to token efficiency means for China’s AI coding market.
