When ChatGPT Health Became “America’s Afu”

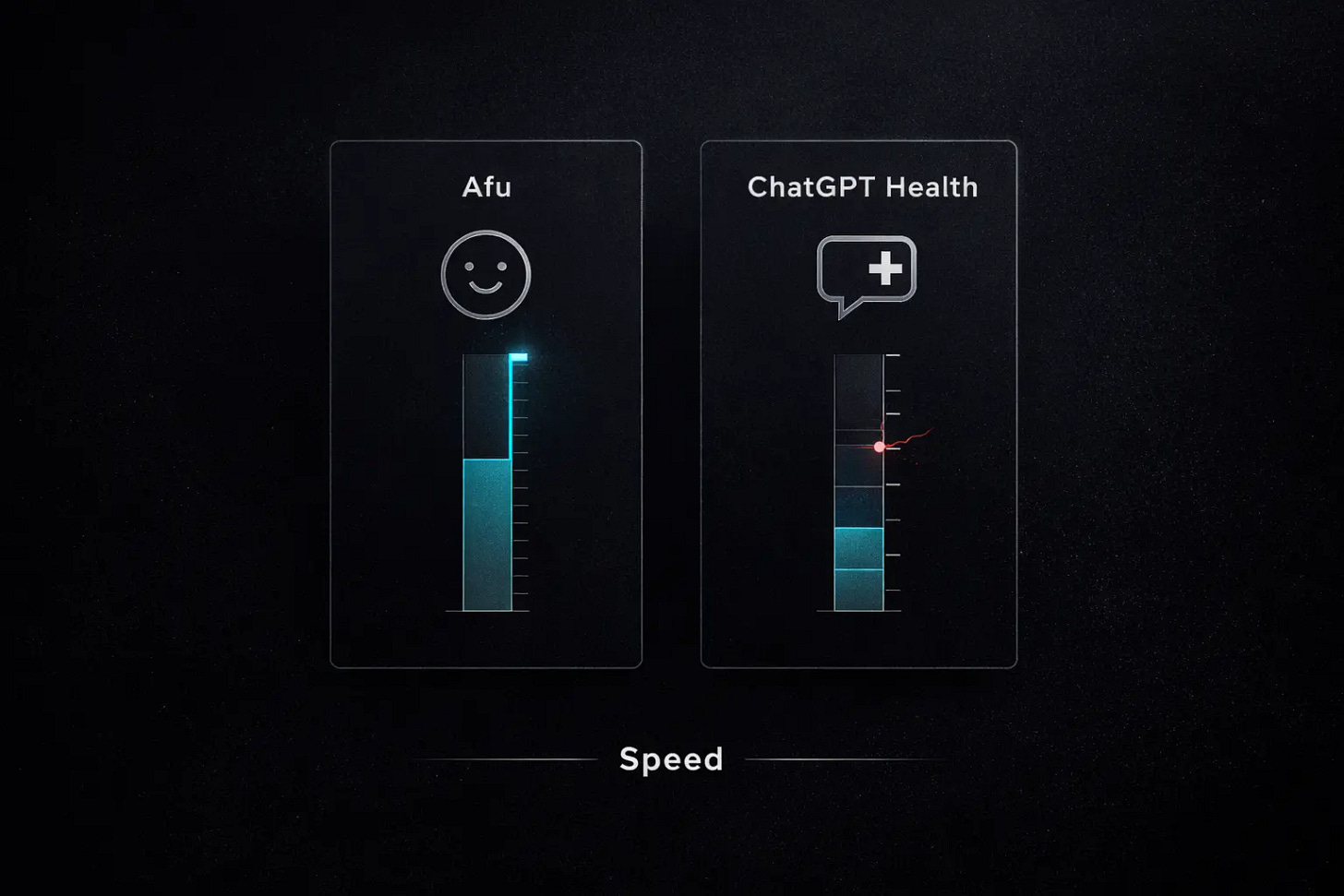

Afu hit 30M MAU as ChatGPT Health launched. Same tech, two systems: speed versus trust.

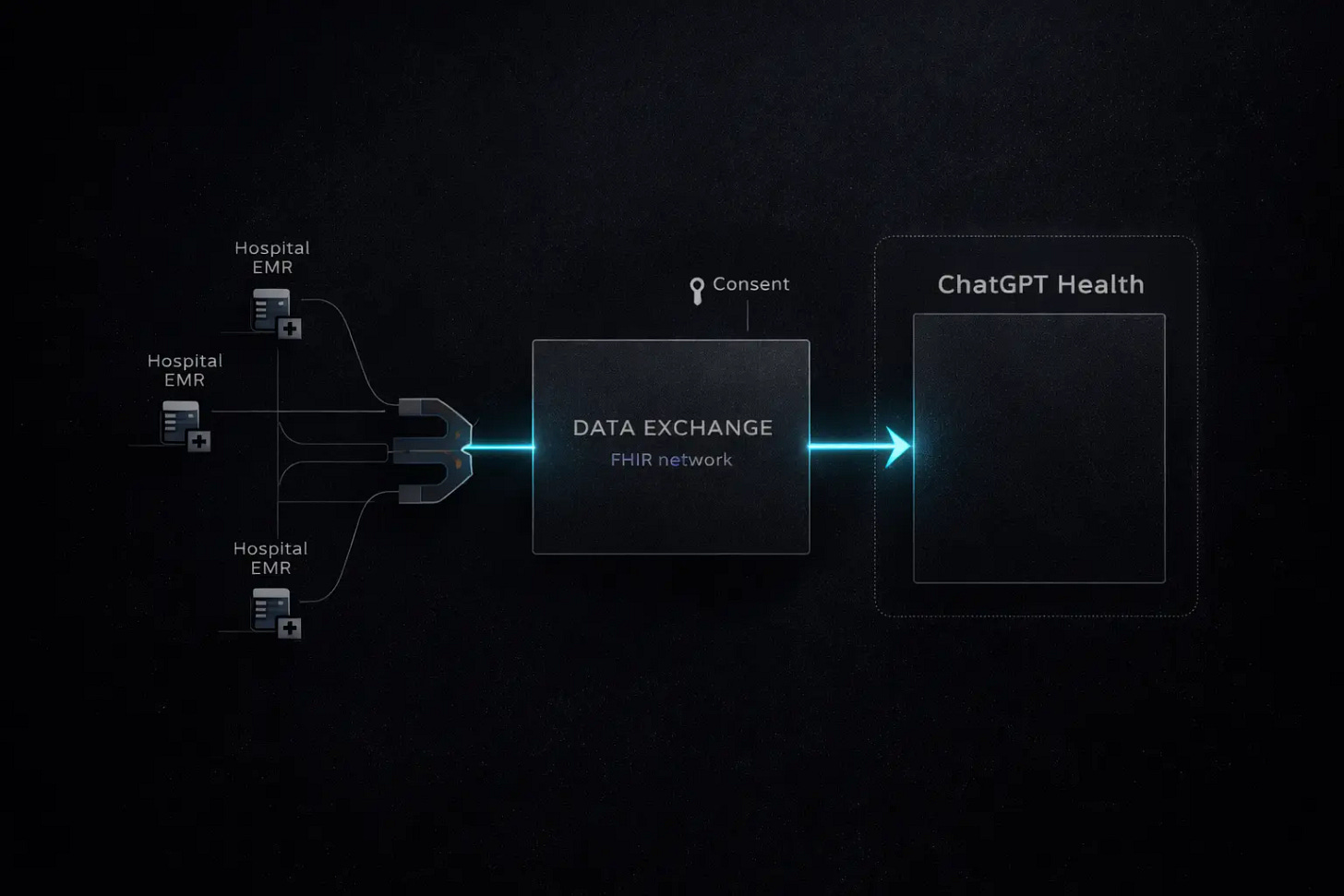

In January 2026, OpenAI launched ChatGPT Health. A dedicated sidebar. Integration with Apple Health. Access to medical records through b.well, a health data network built on the FHIR standard. The American tech press treated it as a milestone.

Chinese social media had a different reaction. The dominant comment: “So this is the American version of Afu.”

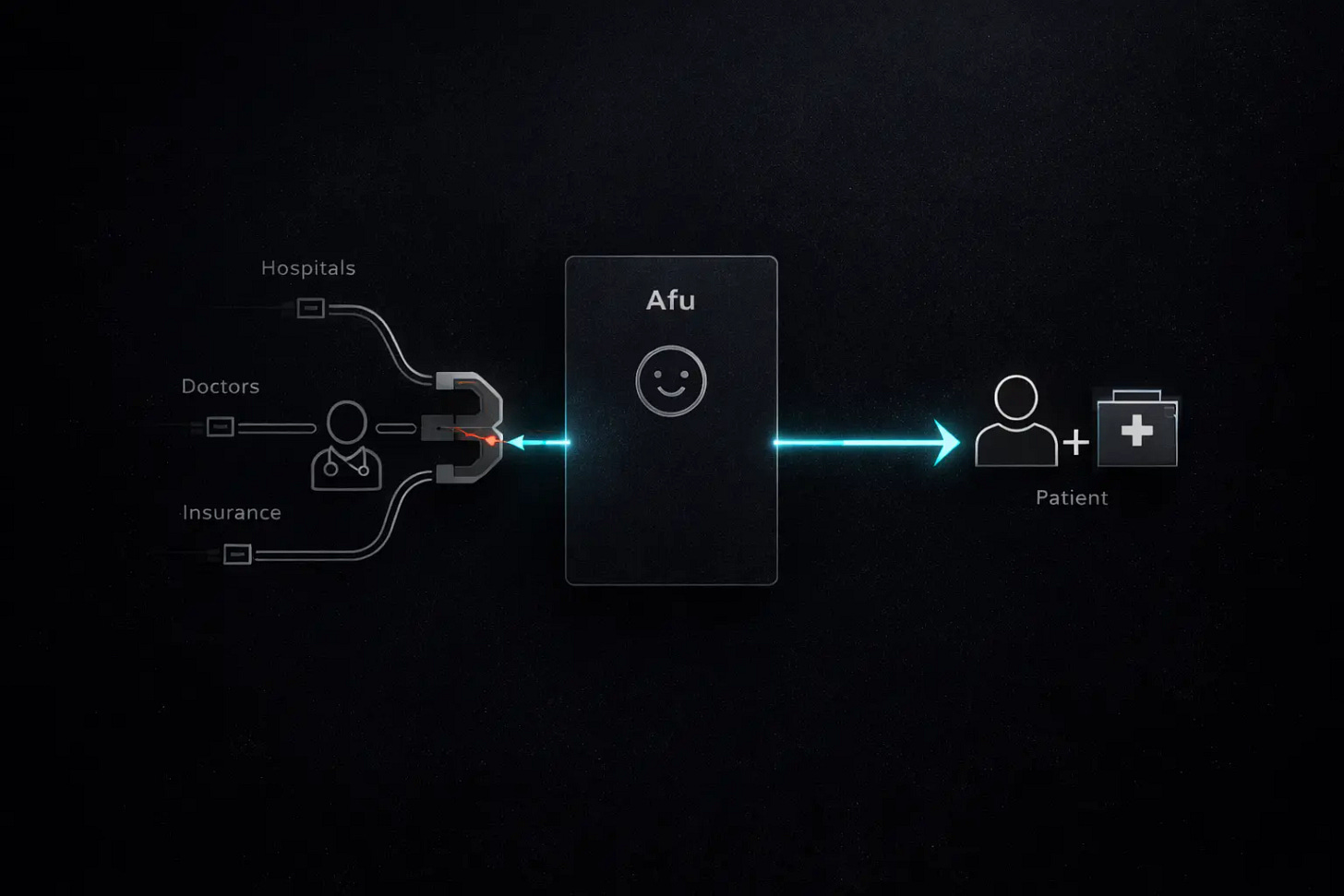

“Afu” is Ant Group’s AI health assistant. It had rebranded just weeks earlier, in mid-December 2025, and was already serving 15 million monthly active users when ChatGPT Health went live. Within days the figure doubled to 30 million. Afu was connected to 5,000 hospitals across China and had built a direct pipeline to 300,000 licensed physicians. The name — chosen personally by Jack Ma — is designed to sound like a trusted neighbor. Not a medical device. Not a clinical tool. A friend who happens to know a lot about health.

The comparison resonated because it was directionally accurate. A Chinese consumer AI product had been operating at scale in the health vertical before its closest American counterpart even launched. The usual narrative, in which Silicon Valley innovates and China follows, did not apply.

But the “American Afu” framing obscures a deeper divergence. Both countries are experiencing the same AI healthcare explosion. They are having entirely different conversations about it.

China: The Speed Game

China’s AI health market is in full sprint. The competitive intensity resembles the ride-hailing wars of 2015 or the community group-buying battles of 2020. Multiple well-capitalized players are throwing resources at the same opportunity. The race rewards speed over caution.

Ant Group sets the pace. After acquiring Haodf — China’s largest physician-patient platform, home to hundreds of thousands of registered doctors — in early 2025, the company relaunched its health product under the Afu brand in December. What followed was a marketing campaign that would be unrecognizable to anyone in Western digital health. Street teams stationed at shopping malls. QR codes plastered in public restrooms. Painted slogans on rural village walls. Promoters on social media openly advertised payouts of up to 10 RMB per successful signup. Monthly active users doubled from 15 million to 30 million in four weeks.

More than half of Afu’s users come from cities below the third tier. This is the number that matters most. In China’s smaller cities and rural counties, seeing a specialist can mean a full day of travel. Afu offers these users something they never had: instant access to a medical knowledge base connected to real physician networks, tied to their national health insurance through Alipay. Free. Twenty-four hours a day.

Ant is not alone. Baidu embedded its “Wenxin Health Manager” into the search ecosystem, positioning AI as a smarter front door for medical information. JD Health launched “Kangkang,” linking AI consultations directly to its pharmaceutical logistics — ask a question, get a recommendation, have the medicine delivered within hours. ByteDance deployed “Xiaohe AI Doctor” as a mini-program inside Douyin. Tencent is building from a layer below, focusing on hospital IT infrastructure and medical imaging rather than consumer-facing products.

At least ten major companies have shipped standalone AI health products in China. The market has split into two lanes. One lane serves patients: health companions that answer questions, interpret lab reports, track fitness data, and book appointments. The other lane serves physicians: clinical decision support, automated documentation, evidence-based reasoning tools. The patient lane is louder. The physician lane may prove more durable.

The underlying force is structural. China’s healthcare system covers 1.4 billion people with roughly five million licensed physicians, or about 3.6 per 1,000 people. That headline ratio is not catastrophic by global standards. The distribution is. AI health assistants address this gap in a way that previous internet health platforms, which mostly devolved into online pharmacies, never managed. The cost of a medical interaction dropped to zero. Usage followed.

America: The Caution Game

The American AI healthcare conversation operates in a different register. Where Chinese media covers market share battles and user acquisition, US coverage fixates on risk, liability, and early warning signs.

OpenAI actually shipped two distinct products in January. ChatGPT Health, the consumer feature, gives everyday users a dedicated sidebar for health queries. Data lives in a separate silo from regular conversations. The system maintains an independent memory that never bleeds into other contexts. OpenAI pledged explicitly that health conversations would not be used to train its foundation models. The architecture solves a trust problem before solving a medical one. But ChatGPT Health is not a clinical product and does not fall under HIPAA.

The second product, OpenAI for Healthcare, does. This is the enterprise version aimed at clinicians: HIPAA-compliant, designed to help physicians reason through medical cases, and already rolling out to institutions including Boston Children’s Hospital, Memorial Sloan Kettering and Cedars-Sinai.

The two-track launch reflects the environment. The US healthcare system is among the most heavily litigated on earth. Institutional partners need contractual certainty that patient data stays ring-fenced. Consumer users need to feel their records will not resurface in an unrelated chat session.

Anthropic, whose CEO trained as a biophysicist, takes a parallel approach. Claude is being positioned for clinical workflows through dedicated enterprise healthcare events. The playbook: earn institutional trust first, then scale.

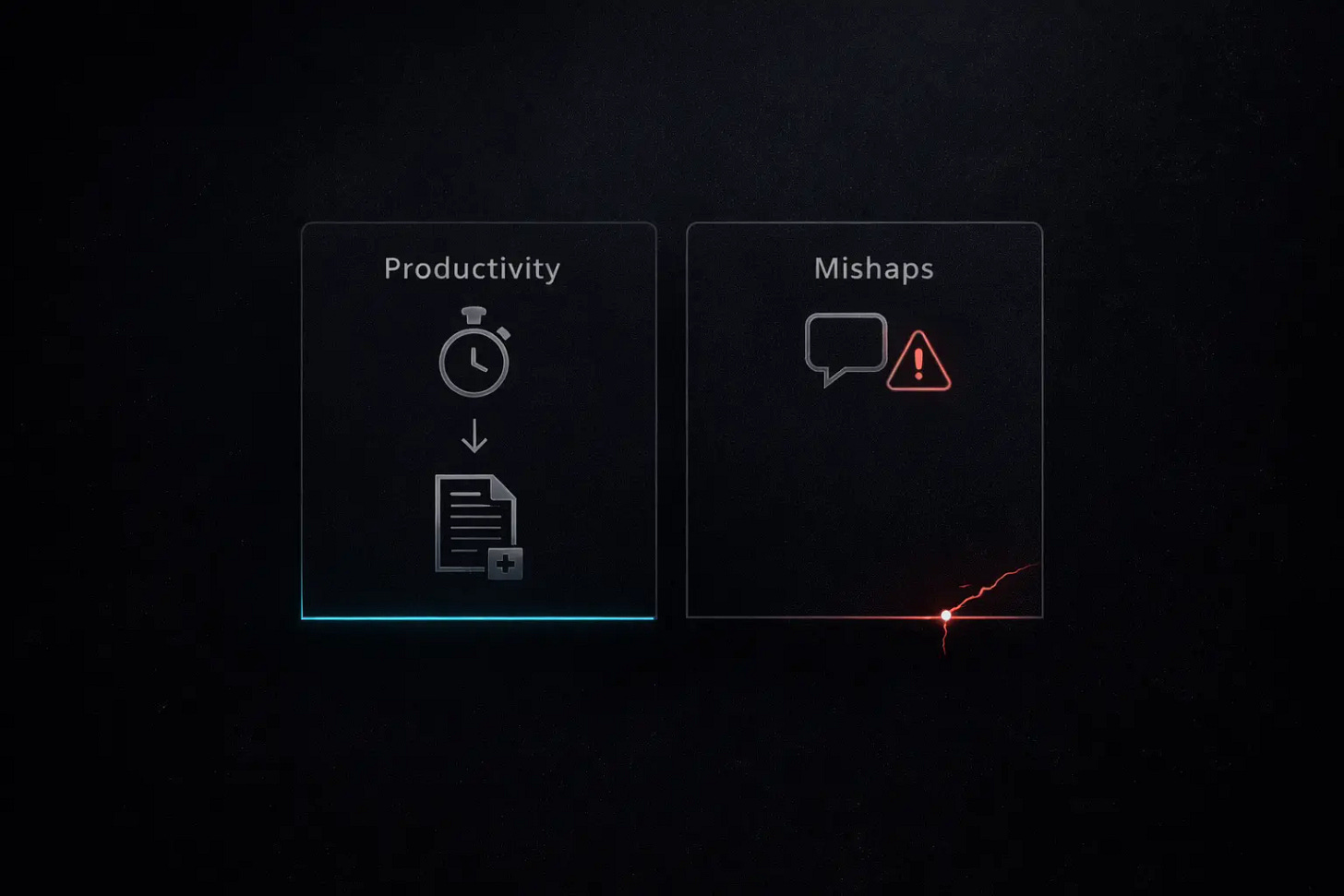

Meanwhile, American journalists and physicians have been stress-testing these tools. The results are sobering.

A Washington Post columnist connected years of Apple Watch data — millions of heartbeats, tens of millions of steps — to ChatGPT Health. The system gave him an F for cardiac fitness. His actual cardiologist put him at low risk. Eric Topol, one of America’s most cited digital health researchers, reviewed the output and dismissed it as groundless. When the test was repeated, scores swung between F and B with no change in underlying data. The system could not consistently remember the user’s age or gender between sessions.

A Wall Street Journal investigation found that 27 percent of US health systems now pay for commercial AI licenses — triple the economy-wide average. But deployments expose real problems. At one hospital, an AI patient messaging system told a person requesting a walker that it “couldn’t help.” It told a patient with headaches they might have a brain tumor. A study in The Lancet found that physicians who used AI-assisted colonoscopy tools for three months detected fewer abnormalities once the tool was removed. The AI did not just supplement human skill. It displaced it.

The darkest findings came from the New York Times. More than 100 therapists and psychiatrists described cases where extended chatbot interactions triggered psychotic episodes and suicidal thinking. OpenAI’s own internal estimates suggest that roughly 1.2 million users per month discuss suicidal intentions with ChatGPT, while around 560,000 exhibit signs of psychosis or mania during conversations. Safety guardrails, the investigation found, degrade during prolonged multi-day interactions, which is precisely the pattern most common among vulnerable users.

None of this negates the technology’s potential. The same WSJ investigation showed AI cutting radiology report turnaround from 75 seconds to roughly 45 at one hospital network, and helping another recover millions in wrongly denied insurance claims. The evidence is genuinely mixed. But America’s public conversation weights the downside more heavily than China’s does.

The Paradox Neither Side Has Solved

A study cited during a recent conversation between Bill Gates, Microsoft Research president Peter Lee, and an OpenAI research lead captures the core tension.

Physicians diagnosing alone: 75 percent accuracy. AI diagnosing alone: 90 percent. Physicians using AI as an aid: 80 percent.

The combination underperformed the machine by itself. The most likely explanation: doctors second-guessed the AI when it was correct and deferred to it when it was wrong. The collaboration introduced noise rather than removing it.

This finding matters equally in both countries. China is scaling access to AI-assisted medical reasoning at unprecedented speed. America is integrating AI into clinical workflows with unprecedented care. Neither has cracked the human-AI interaction problem. The technology outperforms a physician in controlled settings. Putting the two together does not reliably produce better results.
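A toy calculation shows how a physician-plus-AI team can land between the two solo figures. This is a minimal sketch, not the study’s method. The function `team_accuracy` and its two acceptance probabilities are hypothetical, and the model assumes the physician’s own diagnosis is right 75 percent of the time regardless of whether the AI is right.

```python
# Toy model only. The 0.75 and 0.90 figures come from the article;
# the acceptance probabilities are illustrative assumptions.

PHYSICIAN_ALONE = 0.75
AI_ALONE = 0.90


def team_accuracy(accept_when_ai_right: float, accept_when_ai_wrong: float) -> float:
    """Accuracy of a physician who sometimes adopts the AI's answer.

    Assumes the physician's independent diagnosis is correct with
    probability PHYSICIAN_ALONE whether or not the AI is correct.
    """
    # AI is right: the team is right if the physician accepts the AI,
    # or rejects it but reaches the right answer independently.
    right_branch = AI_ALONE * (
        accept_when_ai_right + (1 - accept_when_ai_right) * PHYSICIAN_ALONE
    )
    # AI is wrong: the team is right only if the physician rejects the AI
    # and reaches the right answer independently.
    wrong_branch = (1 - AI_ALONE) * (1 - accept_when_ai_wrong) * PHYSICIAN_ALONE
    return right_branch + wrong_branch


# Trust that ignores whether the AI is right: accept one time in three.
indiscriminate = team_accuracy(1 / 3, 1 / 3)

# Perfectly calibrated trust: accept only when the AI is right.
calibrated = team_accuracy(1.0, 0.0)
```

Under these assumptions, a physician who adopts the AI’s answer one time in three, with no ability to tell good answers from bad, scores about 80 percent, the same as the reported team figure. Perfectly calibrated trust would score about 97.5 percent. The gap between the two is the cost of not knowing when to defer.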

China’s risk is reaching massive scale before this problem is understood. America’s risk is moving so slowly that the benefits never reach the people who need them most — the uninsured, the rural, the overwhelmed. Seven out of ten health conversations on ChatGPT already happen outside clinic hours. Patients are not waiting for the system to be validated. They are using it because the available alternative is worse.

What the Gap Reveals

The divergence is not really about models. Chinese and American AI systems now perform comparably on medical benchmarks. Baichuan Intelligence claims its purpose-built M3 model outscored GPT-5.2 on OpenAI’s HealthBench evaluation, with a reported hallucination rate of 3.5 percent. Whether those numbers hold up under independent scrutiny is an open question, but the direction is clear: the model gap for healthcare-specific tasks has narrowed sharply.

The divergence is structural. It reflects differences in healthcare architecture, regulatory logic, liability culture, and tolerance for iteration.

China’s system is centralized enough that a single app can plug into thousands of public hospitals through standardized interfaces. The US system is fragmented across private insurers, competing hospital networks, and incompatible medical record platforms. OpenAI needed a third-party intermediary just to aggregate patient data across institutions. Ant Group built the pipeline in-house.

China’s regulatory environment allows rapid consumer deployment with oversight applied after the fact. America’s system demands pre-deployment compliance, institutional review boards, and contractual liability allocation. Both carry costs. China risks harm at scale before safeguards catch up. America risks confining the technology to institutions wealthy enough to navigate the compliance machinery, which would exclude the people who stand to benefit most.

The liability question exposes the sharpest divide. In the US, “who is responsible when AI gives bad medical advice?” is a question that triggers immediate legal calculation. Malpractice frameworks exist but have not been adapted for AI. In China, the legal landscape is equally undeveloped, but institutional tolerance for learning through deployment is higher. This is the same dynamic that allowed Chinese autonomous vehicle companies to accumulate hundreds of millions of real-world test kilometers while American counterparts were still negotiating permits.

What to Watch

Clinical evidence. Both countries are now generating real-world data on AI health tools at scale. The first rigorous studies comparing patient outcomes with and without AI assistance will likely emerge within the next year. Boston Children’s Hospital is running one. Chinese institutions, with their volume advantage, could publish sooner. These results will either accelerate global adoption or trigger regulatory contraction.

The physician battleground. Consumer health assistants dominate the headlines, but the more consequential competition may be for physician adoption. In early 2026, Ant Group, JD Health, and Alibaba Health each announced AI tools built specifically for doctors. If AI can cut a physician’s administrative load by even a third, the capacity effect in an overstretched system is enormous. The companies that win doctors will build the most defensible franchises.

The first major incident. US states have begun enacting laws that restrict AI chatbots from offering mental health or therapeutic guidance. China has issued guidelines but not imposed hard limits on consumer AI health products. The first widely publicized case of serious patient harm linked to an AI health tool — in either country — will reshape the regulatory conversation overnight.

The scale of unmet demand is no longer in question. Over 200 million health-related queries hit ChatGPT every week. Afu fields 10 million questions a day. People want this technology in its current, imperfect state. The open question is whether the transformation will be governed by the speed of deployment or by the speed of understanding what deployment actually does.

China is betting on the former. America is betting on the latter. Neither bet is obviously wrong.