The Export That Tariffs Can't Touch

Nvidia’s best quarter ever validated China’s cheapest export.

“Compute equals revenues.” Jensen Huang said it four times during Nvidia’s fourth-quarter earnings call on Wednesday evening. The logic was simple: in a world where software runs on AI, and AI runs on tokens, the ability to generate tokens is the ability to generate income. Nvidia had just reported $68.1 billion in quarterly revenue, up 73 percent year over year. Data center sales reached $62.3 billion, accounting for 91 percent of the total. First-quarter guidance came in at $78 billion, above even the most optimistic buy-side forecasts.

The stock fell 5 percent.

Wall Street’s reaction had less to do with the quarter Nvidia delivered and more to do with a question the numbers could not answer: who will keep paying for all this compute, and at what price? Richard Clode of Janus Henderson framed the concern: “The debate has shifted away from near-term results and toward the sustainability of AI capex spending.” Tom Graff of Facet pointed to what was absent: “If players like OpenAI might be slowing spending, that would show up in actual revenue one to two quarters from now.”

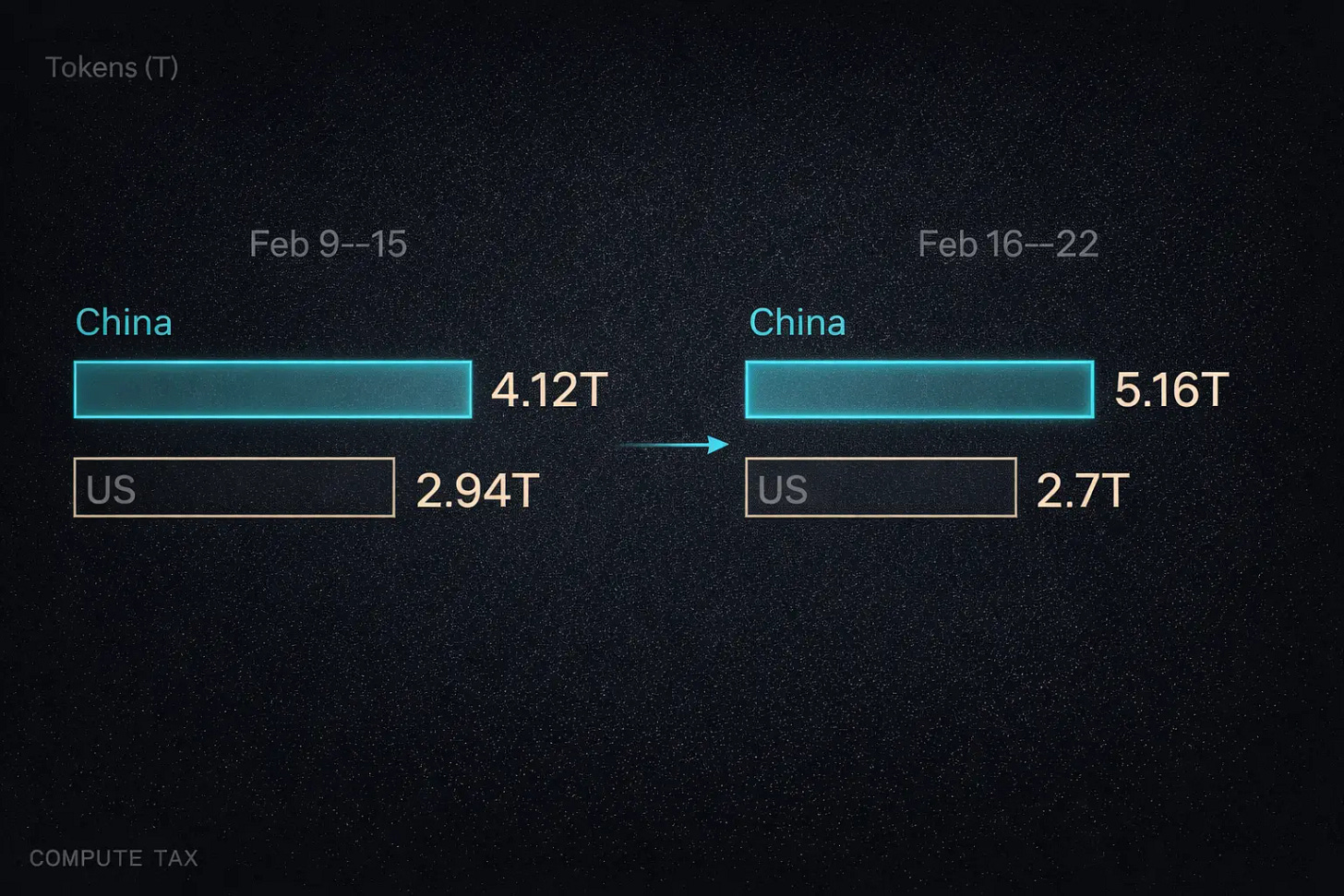

That same week, a different set of numbers suggested where part of the world’s token demand is heading. OpenRouter, one of the most widely used platforms where developers access AI models through a unified API, had been tracking a rapid shift. During the week of February 9, Chinese models on the platform surpassed American ones in token consumption for the first time: 4.12 trillion tokens against 2.94 trillion. By the following week, the gap had widened sharply, with Chinese models reaching 5.16 trillion while American models fell to 2.7 trillion. MiniMax’s M2.5 ranked first. Kimi K2.5 and Zhipu’s GLM-5 followed in second and third.
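To put the two weeks side by side, here is a minimal Python sketch that turns the token volumes quoted above into a share and a lead ratio. The figures come from the text; the function names are illustrative, and the share is measured only against the combined Chinese and American totals, since the text gives no figure for the rest of the platform's traffic.

```python
# Weekly token volumes in trillions, as quoted in the text above.
WEEKLY_TOKENS_TRILLIONS = {
    "week of February 9": {"chinese": 4.12, "american": 2.94},
    "following week": {"chinese": 5.16, "american": 2.70},
}


def chinese_share(chinese: float, american: float) -> float:
    """Chinese share of the combined Chinese + American volume.

    This is not a share of all OpenRouter traffic: models from other
    regions are not included in the figures quoted in the text.
    """
    return chinese / (chinese + american)


def lead_ratio(chinese: float, american: float) -> float:
    """How many Chinese-model tokens were consumed per American-model token."""
    return chinese / american


for label, volumes in WEEKLY_TOKENS_TRILLIONS.items():
    share = chinese_share(**volumes)
    ratio = lead_ratio(**volumes)
    print(f"{label}: share {share:.1%}, lead ratio {ratio:.2f}x")
```

On these numbers, the Chinese share of the two-group total moves from roughly 58 percent to roughly 66 percent in a single week, and the lead ratio from about 1.4 to about 1.9.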

The Chinese tech press has a name for this: “Token 出海,” or Token Export. The concept deserves more attention from English-language readers than it has received.

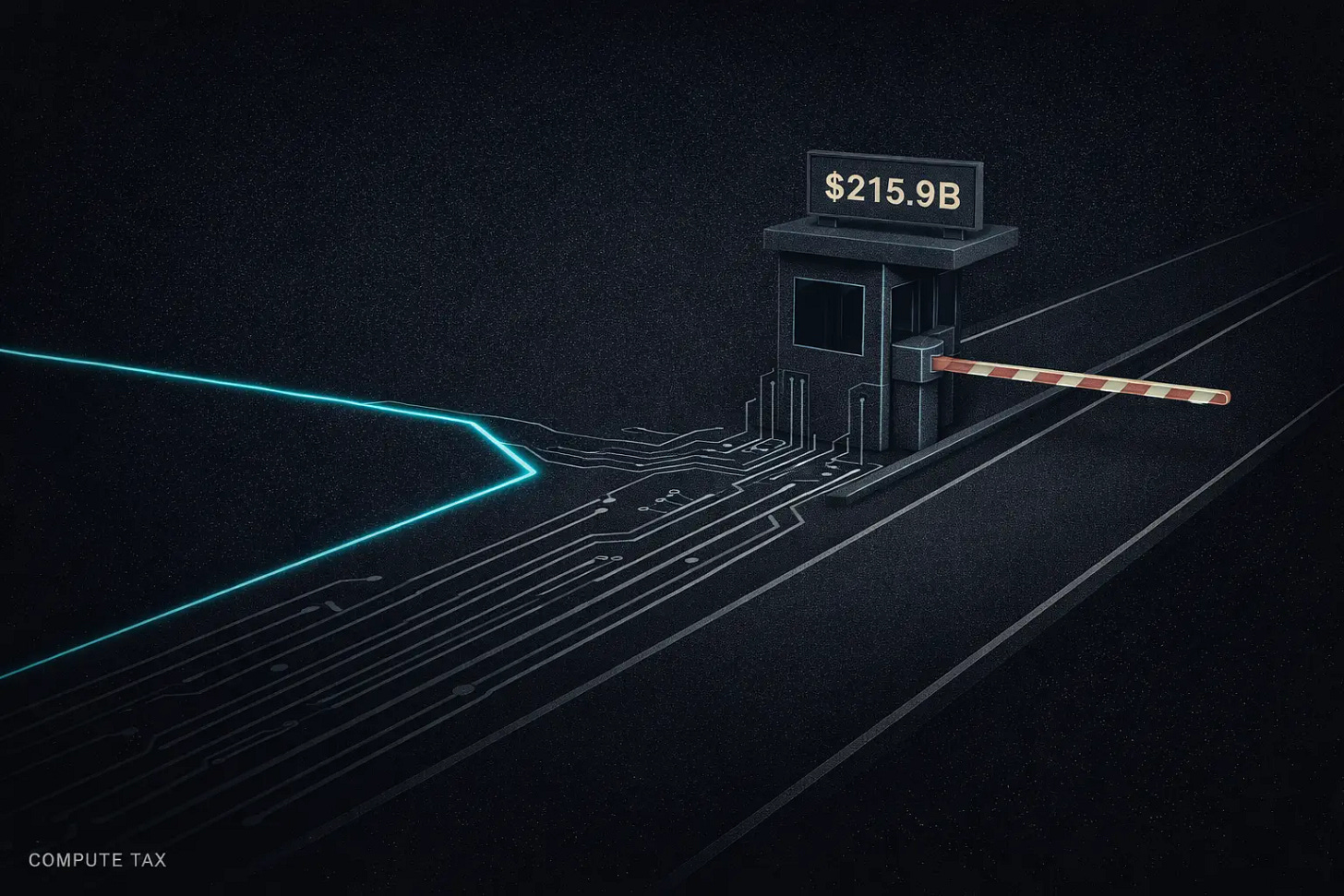

The $215.9 Billion Toll Road

Nvidia’s full fiscal-year revenue reached $215.9 billion. In Chinese financial media, this figure has acquired a pointed label: the “compute tax.” Every hyperscaler building AI infrastructure, every startup training a foundation model, every enterprise deploying an AI agent ultimately routes spending through Nvidia’s GPUs. The company captures that spending the way a tollbooth captures highway traffic.

The problem with tollbooths is that they create incentives for detours.

Nvidia’s earnings call showed that analysts are already tracking those incentives. In a research note, Fundstrat strategist Hardika Singh described the report as highlighting Nvidia’s “narrowing moat in the evolving world of compute.” Multiple analysts flagged the same concern: as the industry pivots from training-heavy workloads toward inference, hardware requirements shift. Inference favors cost efficiency over raw performance. And cost efficiency is where China’s AI companies have built an advantage that few Western observers have fully appreciated.

Huang himself framed the stakes. “Producing tokens is going to be the future of computing,” he told the Goldman Sachs analyst who asked about the sustainability of data center spending. “Every company will produce tokens. That is the reason why I call them AI factories.” If every company becomes a token factory, the economics of token production become the central competitive variable. And on that variable, a new competitor has emerged.

Selling Electricity as Intelligence

Here is how Token Export works in practice.

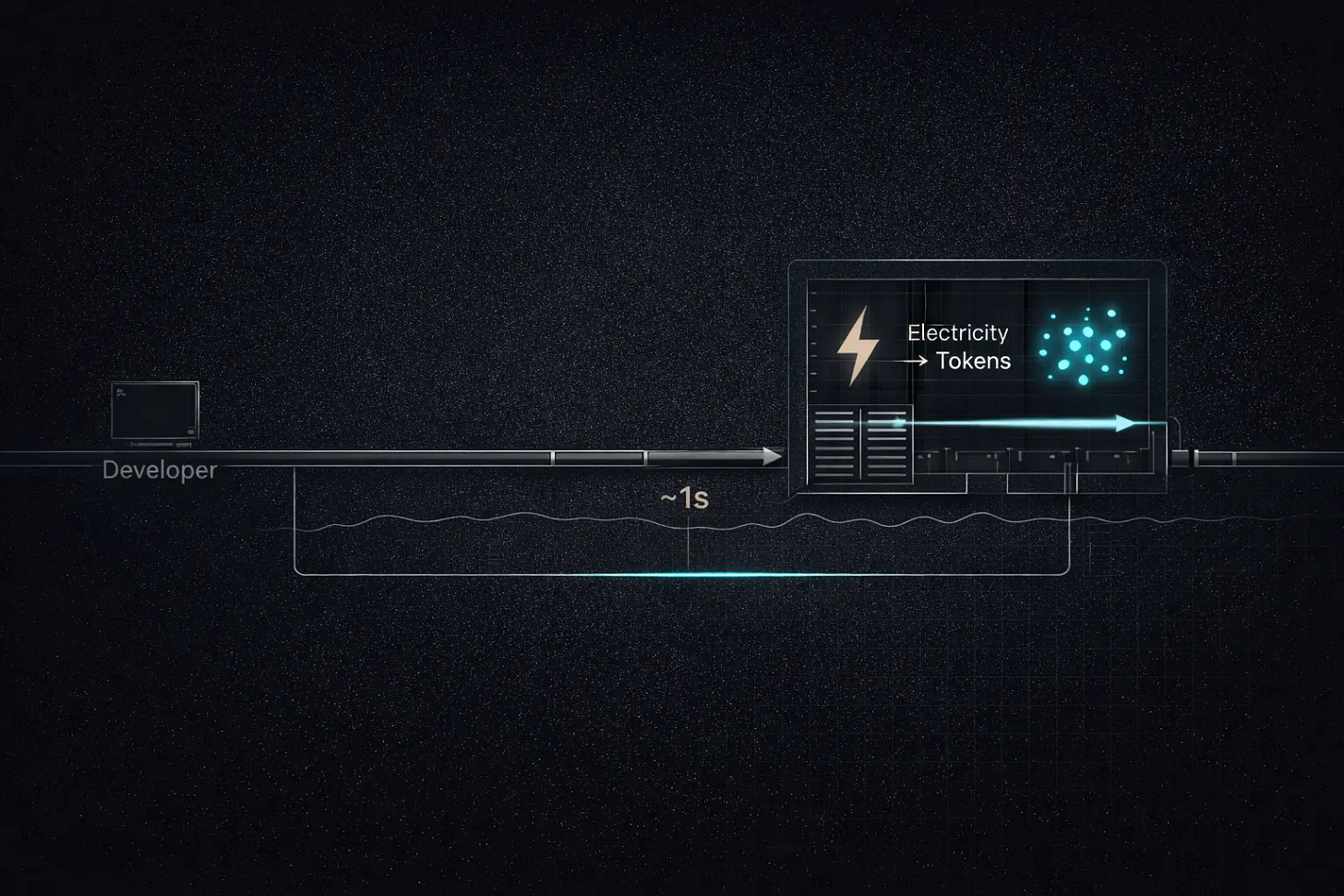

A developer in San Francisco opens OpenRouter, selects MiniMax M2.5 as her inference backend, and sends a prompt through the API. The request travels across the Pacific via undersea fiber-optic cable, arrives at a data center in China, and is processed by a GPU cluster drawing power from the Chinese electrical grid. The result returns in roughly a second. The developer pays $0.30 per million input tokens. Had she selected Claude Opus 4.6, she would have paid $5.00 for the same volume. On a software engineering evaluation tracked by OpenRouter, the quality gap between the two models is less than one percentage point.
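The price gap is easier to feel as a bill. The Python sketch below uses only the two input-token prices quoted above; the monthly volume of one billion input tokens is a hypothetical workload chosen for illustration, and output-token pricing is left out because the text does not give it.

```python
# Input-token prices in USD per million tokens, as quoted in the text above.
PRICE_PER_MILLION_INPUT_USD = {
    "MiniMax M2.5": 0.30,
    "Claude Opus 4.6": 5.00,
}

# Hypothetical workload, chosen only for illustration (not from the source).
HYPOTHETICAL_MONTHLY_INPUT_TOKENS = 1_000_000_000


def input_cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost of sending `tokens` input tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


monthly_costs = {
    model: input_cost_usd(HYPOTHETICAL_MONTHLY_INPUT_TOKENS, price)
    for model, price in PRICE_PER_MILLION_INPUT_USD.items()
}

# Ratio of the two quoted prices; independent of the assumed volume.
price_ratio = (
    PRICE_PER_MILLION_INPUT_USD["Claude Opus 4.6"]
    / PRICE_PER_MILLION_INPUT_USD["MiniMax M2.5"]
)

for model, cost in monthly_costs.items():
    print(f"{model}: ${cost:,.2f} per month for input tokens")
print(f"Price ratio: {price_ratio:.1f}x")
```

Under that assumed volume, input tokens alone come to about $300 on MiniMax M2.5 against about $5,000 on Claude Opus 4.6, a ratio of roughly 16.7 to 1 that holds at any volume because it depends only on the two quoted prices.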

Not every request follows this exact route. Some Chinese AI companies also run inference at overseas nodes they control. But in the most common configuration, the compute happens on Chinese soil, and the electricity never leaves China. The value of that electricity, converted into inference tokens, crosses the border without passing through customs, without incurring tariffs, and without appearing in any conventional trade statistic. Token Export is a form of cross-border service trade that existing policy frameworks were never designed to track.

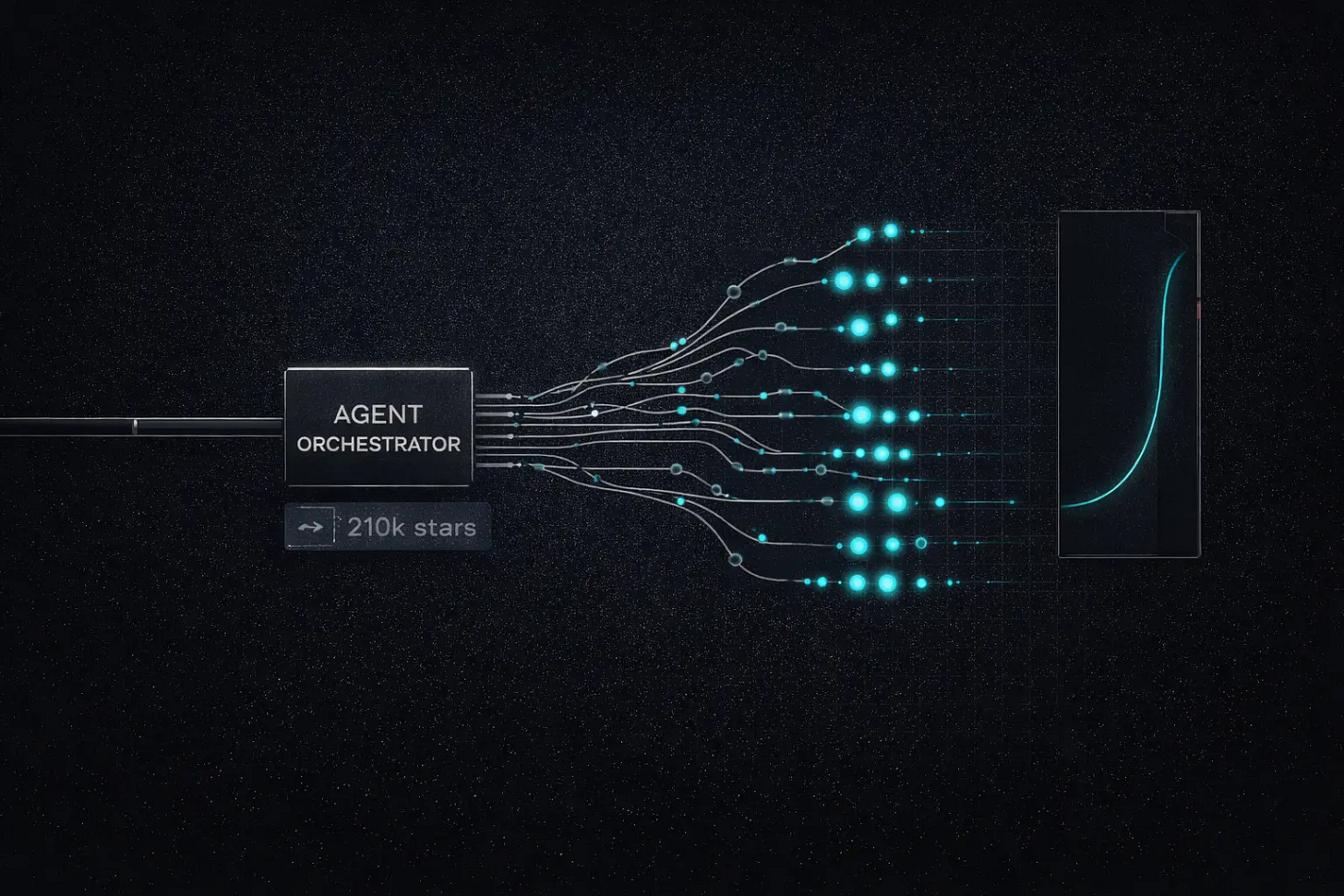

This dynamic accelerated in early 2026 when OpenClaw, an open-source tool that enables AI to autonomously control a computer and execute complex workflows, went viral, accumulating 210,000 GitHub stars within weeks. Before tools like OpenClaw, a typical AI conversation consumed a few thousand tokens. With autonomous agent workflows running parallel sub-tasks and iterative reasoning chains, token consumption scales by orders of magnitude. Developers who had barely noticed their API bills suddenly found themselves spending tens of dollars per hour.

The cost sensitivity that followed triggered a migration. OpenRouter’s COO Chris Clark confirmed the pattern: Chinese open-source models gained market share specifically because developers were running them inside agent workflows where per-token cost determined the choice of backend. Anthropic and Google responded by tightening their terms of service to block OAuth workarounds that had allowed subscription users to route unlimited queries through tools like OpenClaw. The crackdown pushed even more developers toward cheaper Chinese alternatives. The migration was not ideological. Developers followed the price.